Open Spotify, and it already knows what you feel like listening to. Instagram serves an ad for something you thought about but never searched for. Convenient, almost frictionless, and completely normal in 2026. This is hyper-personalization in digital marketing doing exactly what it was built to do. The question worth asking is: what are we quietly trading away for that convenience?

From mass marketing to a segment of one

Marketing used to run on personas. Not real people, but composite sketches of them. “Sophie, 24, marketing professional, values sustainability.” Whole campaigns were built on Sophie. She did not exist, but she was useful, because if you understood the archetype, you could reach the group. That logic made sense when data was scarce and targeting was blunt.

It does not make much sense anymore. The year 2025 essentially marked the end of broad demographic targeting as a viable strategy. Treating every consumer within a category the same way is now not just inefficient but a strategic mistake. Machine learning models now process behavioral signals in real time: what you clicked, how long you hovered, what you ignored. Rajesh Jain calls this the Segment of One, where AI personalization responds to who you are at this exact moment, not who a spreadsheet decided you were six months ago. The persona has not evolved. It has been replaced by something that actually knows you.

Platforms like Netflix and Amazon understood this early. Their recommendation engines are not a feature; they are the product. For marketers, the appeal of hyper-personalization in digital marketing is obvious: relevance drives engagement, and engagement drives everything else.

The mirror problem of AI personalization

Here is the tension: these AI personalization systems are built entirely on what you have already done. They are extraordinarily good at predicting your next move, but only because they never challenge your existing preferences. The core promise of AI personalization is relevance, but in practice these systems optimize for what you already like, which sounds great until you realize what that actually means.

Hyper-personalization does not show you the world. It shows you yourself.

Think about the last time an algorithm genuinely surprised you: not by showing you more of what you like, but by introducing something you would never have found on your own. An author outside your usual genres. A brand you had no reason to know existed. That kind of discovery, the accidental kind, is quietly disappearing from the consumer experience. The algorithm is not designed for it. Relevance and discovery are not the same thing, and right now, relevance is winning.

The hyper-personalization paradox

This is where it gets uncomfortable.

The more you click, the more it learns. The more it learns, the more it shows you what you already like. The more it shows you what you already like, the less you need to choose. The less you choose, the less you know what you actually want.

A system designed to serve your preferences ends up quietly eroding your ability to form them.

In reality, this is not a UX problem. It is something more fundamental. When an algorithm continuously filters what you see, your preferences stop being entirely yours. They become a reflection of what engagement data told a machine about you three months ago. A slightly outdated, flattened version of yourself, served back to you on a loop. This is the filter bubble effect at the individual level: not a political phenomenon, but a personal one, and one of the most underestimated consequences of hyper-personalization on the consumer experience.

Consumer psychology has a name for what gets lost here: human agency. Research into the ethical limits of hyper-personalization shows that when systems anticipate needs so precisely that users no longer have to actively search for anything, the boundary between AI personalization and psychological manipulation starts to blur. The algorithm does not just predict what you want. Given enough time, it starts to shape it.

And from a purely strategic standpoint, this should worry marketers too. A consumer whose taste has been entirely outsourced to your platform is not loyal. They are dependent. Dependency and loyalty feel similar until a competitor builds a slightly better filter bubble, and then they vanish.

Decision fatigue: how AI personalization makes us passive

In practice, much of this comes down to a structural problem in digital environments. The sheer volume of available options makes choosing exhausting. AI personalization removes that friction. It narrows the field, surfaces the most likely candidates, and takes away the cognitive load of deciding. Research published in the Journal of Research in Interactive Marketing (Bhojwani, Paul and Srivastava, 2026) found that while AI personalization does simplify product evaluation, it also generates real decision fatigue and raises legitimate questions about consumer autonomy over time.

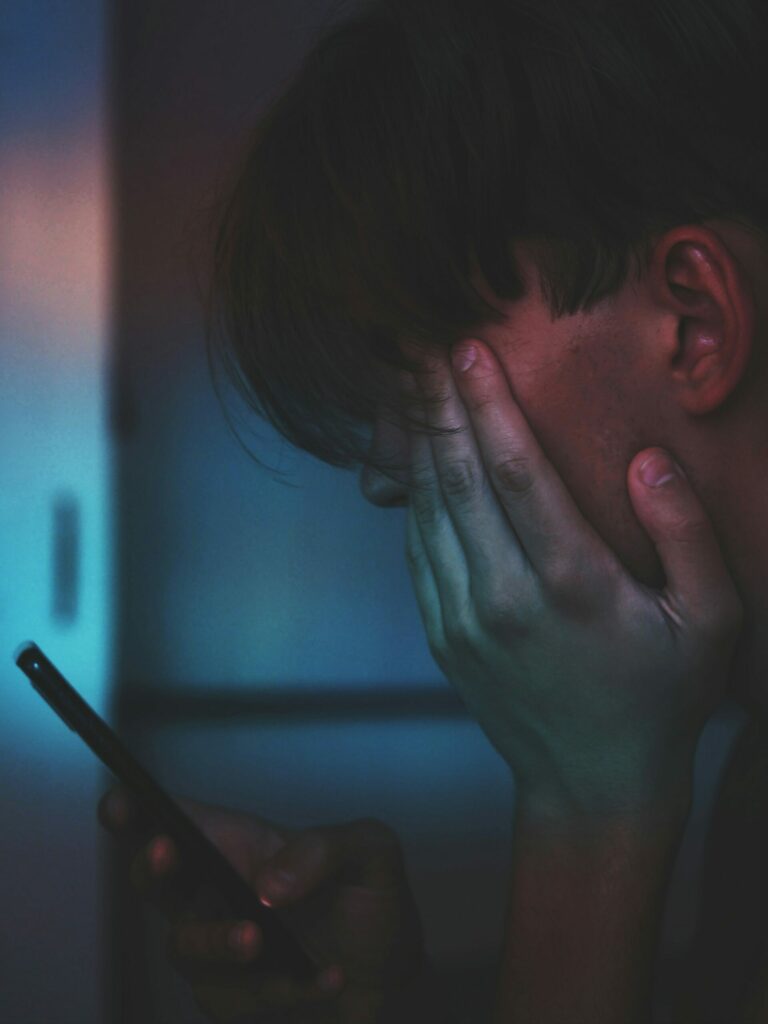

TikTok is the clearest example of where hyper-personalization leads. The infinite scroll removes every natural stopping point. There is no chapter break, no end of page, no moment where the app tells you that is probably enough. What starts as ten minutes of browsing turns into an hour of doomscrolling, and you were not really choosing any of it. This is what a consumer experience built entirely around retention looks like in practice. We did not build smarter tools. We built tools that made us more comfortable being passive.

Technology was supposed to serve us. Somewhere along the scroll, we started serving it.

The shift from active explorer to passive receiver happens so gradually you barely notice. And once you are used to letting the AI personalization system decide, the habit is genuinely hard to break. Taste, curiosity, the impulse to go looking for something new: all of it gets slowly outsourced to a platform optimized for your retention, not your growth.

Filter bubbles and the narrowing of experience

Beyond the individual, the picture gets more concerning. Hyper-personalization in digital marketing produces filter bubbles at scale: environments where everything you encounter has been pre-selected to match your existing worldview. Individualized recommendations create preference loops where consumers see similar products, similar content, and similar perspectives over and over again. This filter bubble effect does not just limit discovery; it actively narrows the consumer experience and drives impulsive purchasing by constantly reinforcing what already appeals to you.

A music platform that only recommends jazz because you listened to jazz last Tuesday is not broadening your world; it is managing your attention.

The more precisely AI personalization maps your past, the more its filter bubble risks becoming the ceiling of your future consumer experience.

What digital marketing should do differently

Abandoning hyper-personalization in digital marketing is not a realistic answer. The efficiency is real, and consumers have come to expect a level of relevance that generic campaigns cannot deliver. But there is a meaningful difference between personalization that serves users and personalization that traps them.

One idea gaining traction is controlled unpredictability: deliberately introducing content slightly outside a user’s established taste, close enough to feel relevant but different enough to actually expand the consumer experience. Transparency is another lever: giving users real visibility into how their data shapes their feed, and genuine control to adjust it. As consumer expectations have shifted from wanting choice to demanding immediate relevance, that kind of transparency is not just ethical; it is a competitive differentiator.
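To make controlled unpredictability a little more concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not a description of how any real platform works. It assumes every catalog item already has a taste-similarity score between 0 and 1 for a given user; the function name `recommend`, the `explore_rate` share of slots, and the 0.4 to 0.7 "close but different" band are all hypothetical choices made for this example.

```python
import random


def recommend(scores, k=5, explore_rate=0.2, band=(0.4, 0.7), rng=None):
    """Return up to k item ids: mostly top matches plus a few 'stretch' picks.

    Hypothetical sketch. scores maps item id -> similarity to the user's
    taste (0..1). explore_rate is the share of slots reserved for stretch
    picks; band is the similarity range treated as 'close but different'.
    """
    rng = rng or random.Random()
    # Rank every item from most to least similar to the user's taste.
    ranked = sorted(scores, key=scores.get, reverse=True)
    n_explore = round(k * explore_rate)
    low, high = band
    # Stretch candidates: related to the user's taste, but not the obvious picks.
    stretch = [item for item in ranked if low <= scores[item] < high]
    picks = rng.sample(stretch, min(n_explore, len(stretch)))
    # Fill the remaining slots with the highest-scoring items not already picked.
    exploit = [item for item in ranked if item not in picks][: k - len(picks)]
    return exploit + picks


catalog = {
    "jazz_a": 0.95, "jazz_b": 0.92, "jazz_c": 0.90, "jazz_d": 0.88,
    "jazz_e": 0.85, "soul_a": 0.65, "funk_a": 0.55, "metal_a": 0.05,
}
print(recommend(catalog, k=5, explore_rate=0.2, rng=random.Random(0)))
```

With `k=5` and `explore_rate=0.2`, one of the five slots goes to a stretch pick: for a listener whose history is all jazz, that slot surfaces a soul or funk track, while an item with near-zero similarity never appears. Setting `explore_rate=0` collapses the list back to pure top-k relevance, which is exactly the mirror this article describes.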

Success metrics need rethinking too. Clicks and conversions measure one thing. Return on Experience (ROE) tries to capture something harder to measure but arguably more important: long-term satisfaction, content diversity, trust. Whether brands actually adopt it is another question, but the conversation is at least starting.

A question of responsibility

Hyper-personalization in digital marketing is not going anywhere. If anything, it will get sharper. The real question is what we decide to optimize it for. When algorithms move from relevance into emotional targeting, exploiting psychological vulnerabilities to trigger purchases, the consumer experience becomes a trap rather than a service. The consumer is no longer being served. They are being steered.

The brands that earn lasting trust will be the ones that treat AI personalization as a tool for the user, not just a mechanism for retention. A healthy consumer experience should expand your world, not confirm it.

Knowing your customer and reducing them to their last ten clicks are not the same thing.

Does AI personalization work? Clearly yes. The more interesting question is who it is actually working for.

References

- Rajesh Jain, 2026, RajeshJain.com, https://rajeshjain.com/2026-ai-and-marketing-predictions/, accessed March 16, 2026

- Bhojwani R., Paul J., Srivastava R., 2026, Journal of Research in Interactive Marketing, Emerald Publishing, https://doi.org/10.1108/JRIM-11-2025-0702, accessed March 9, 2026

- IJFMR, 2025, International Journal For Multidisciplinary Research, https://www.ijfmr.com/papers/2025/5/54845.pdf, accessed March 9, 2026

- IJFMR, September 2025, International Journal For Multidisciplinary Research, https://www.ijfmr.com/papers/2025/6/62777.pdf, accessed March 16, 2026

- The CIO Times, 2026, theciotimes.com, https://theciotimes.com/how-ai-is-reshaping-consumer-psychology-in-2026/, accessed March 18, 2026

- Jamieson Rothwell, June 2025, Medium, https://medium.com/ux-management/beyond-demographics-creating-ai-era-personas-that-include-digital-behavior-patterns-093e4f292621, accessed March 18, 2026